The Psychology Behind Moral Dilemmas

The Tug-of-War Within: Unraveling the Psychology of Moral Dilemmas

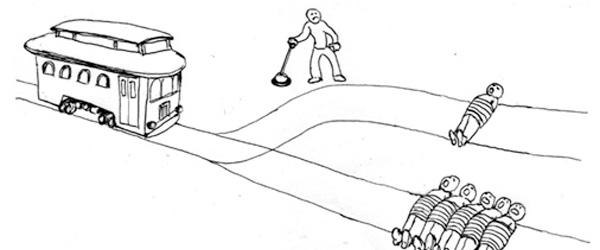

Picture this: a runaway trolley is hurtling down the tracks, and you're standing at a lever. Ahead, five people are tied up and unable to move. You have the power to switch the trolley to a different track, but there's a catch – there is one person on that alternate track. Do you pull the lever, actively causing the death of one to save five? Or do you do nothing, allowing the five to perish through inaction? This is the classic "trolley problem," a thought experiment that has captivated philosophers and psychologists for decades. It's a stark and unsettling scenario, but it perfectly encapsulates the heart of a moral dilemma: a situation where our deeply held values clash, and any choice we make feels, in some way, wrong.

These aren't just abstract puzzles. We face miniature moral dilemmas every day. Do you tell a white lie to spare a friend's feelings? Do you report a coworker for a minor infraction? Do you buy from a company with questionable ethical practices? Understanding the psychological forces at play when we grapple with these questions reveals a fascinating and complex inner world, a constant tug-of-war between our rational minds and our emotional cores.

The Two Voices in Our Heads: Reason vs. Emotion

For centuries, philosophers have debated the primary driver of our moral choices. In the 18th century, David Hume famously argued that "reason is, and ought only to be the slave of the passions," suggesting our feelings are the true motivators of moral action. Modern psychology, particularly through what's known as dual-process theory, has lent scientific weight to this idea. This theory posits that we have two competing cognitive systems for making moral judgments.

System 1: The Gut Reaction

This is our fast, intuitive, and emotionally driven system. It's the gut feeling that tells you pushing a person off a bridge to stop a trolley feels inherently wrong, even if the outcome (saving five lives) is the same as pulling a lever. This system is rooted in our evolutionary history, where quick, instinctual responses were crucial for survival and social cohesion. These automatic emotional processes often support what are known as deontological judgments – decisions based on moral rules and duties, like "thou shalt not kill," regardless of the consequences.

System 2: The Deliberative Thinker

This is our slower, more conscious, and analytical system. It's the part of our brain that can weigh the pros and cons, calculate outcomes, and apply abstract principles. When you start thinking, "saving five lives is better than saving one," you're engaging System 2. This system is associated with utilitarian or consequentialist judgments, which prioritize the action that will produce the greatest good for the greatest number of people.

Most people's responses to the trolley problem illustrate this dual-process conflict. In the initial scenario, as many as 90% of respondents in some studies say they would flip the switch, a utilitarian choice. However, in a variation where you have to physically push a large person onto the tracks to stop the trolley, most people say it would be wrong, a deontological response driven by a strong emotional aversion to direct, personal harm.

The Moral Brain: Mapping Our Ethical Compass

Thanks to advances in neuroscience, we can now peek inside the brain as it wrestles with these dilemmas. Using functional magnetic resonance imaging (fMRI), scientists have identified a network of brain regions that become active during moral decision-making. This isn't one single "morality center," but rather a complex interplay between different areas.

Key players in the moral brain include:

- The Ventromedial Prefrontal Cortex (vmPFC): This area is crucial for processing emotions and is heavily involved in our intuitive, System 1 responses. It is closely tied to feelings such as empathy, guilt, and a sense of fairness.

- The Dorsolateral Prefrontal Cortex (dlPFC): This region is associated with cognitive control, reasoning, and deliberation – the hallmarks of System 2. It helps us override our initial emotional responses and think through the consequences of our actions.

- The Amygdala: This is our brain's alarm system, responsible for processing salient and emotionally charged stimuli, like the distress of others.

- The Temporoparietal Junction (TPJ) and Posterior Superior Temporal Sulcus (pSTS): These areas are central to "Theory of Mind," our ability to understand the intentions, beliefs, and feelings of others, which is a critical component of moral judgment.

When we face a personal moral dilemma, like the footbridge scenario in which you must push someone onto the tracks, emotion-related regions such as the vmPFC and amygdala become more active. When the dilemma is more impersonal, like flipping a switch, the more calculating dlPFC takes the lead. This neural evidence is consistent with dual-process theory, suggesting that the internal conflict between feeling and logic is reflected in distinct brain systems.

Quick Facts

- The term "trolley problem" was coined by philosopher Judith Jarvis Thomson in 1976, building on an earlier thought experiment by Philippa Foot from 1967.

- Research on people with damage to the ventromedial prefrontal cortex (vmPFC) shows they are more likely to make utilitarian judgments in emotionally charged dilemmas, suggesting that the emotional responses this region supports normally hold such judgments in check.

- Neurochemicals like serotonin have been shown to influence moral judgment by enhancing the negative feelings we experience when witnessing harm to others.

- The doctrine of double effect, often credited to Thomas Aquinas, is a philosophical principle that distinguishes between intended and foreseen consequences, which is relevant to many moral dilemmas.

The Role of Empathy: Feeling Our Way to Morality

It's impossible to discuss morality without talking about empathy – our ability to understand and share the feelings of others. Empathy is often considered a cornerstone of moral development, the emotional spark that motivates us to care about the welfare of others. It's what makes the thought of pushing someone to their death so repellent; we vicariously experience their fear and pain.

Research has shown a strong link between empathy and non-utilitarian moral judgments. People with higher levels of empathic concern – feelings of warmth and compassion for others in distress – are less likely to endorse harming one person to save many. This suggests that our emotional connection to the potential victim can override a purely rational calculation of outcomes.

However, empathy isn't a perfect moral guide. It can be biased. We tend to feel more empathy for people who are similar to us or part of our "in-group." This can lead to moral failings when we need to make decisions involving those we perceive as "other." Acknowledging this bias is crucial for developing a more inclusive and just moral framework.

From the Cradle to Adulthood: The Development of Moral Reasoning

Our moral compass isn't something we're born with fully formed; it develops and becomes more sophisticated over time. Two giants in developmental psychology, Jean Piaget and Lawrence Kohlberg, provided foundational theories on how our moral reasoning evolves.

Piaget's Stages of Moral Development

Jean Piaget, through observing children playing games, proposed a two-stage theory of moral development.

- Heteronomous Morality (roughly ages 5-10): In this stage, children see rules as rigid and absolute, handed down by authority figures like parents and teachers. Their judgment of wrongdoing is based on the consequences of an action, not the intention behind it.

- Autonomous Morality (roughly age 10+): As children get older and interact more with peers, they begin to understand that rules are social agreements that can be changed. They recognize that the intention behind an action is more important than its outcome.

Kohlberg's Six Stages

Lawrence Kohlberg expanded on Piaget's work, proposing a more detailed, six-stage theory based on responses to moral dilemmas, most famously the "Heinz dilemma" (should a man steal a drug he cannot afford to save his wife's life?). These stages are grouped into three levels:

- Level 1: Pre-Conventional Morality. Here, morality is self-focused. Stage 1 is about obedience and avoiding punishment. Stage 2 is about self-interest and getting rewards.

- Level 2: Conventional Morality. At this level, morality is centered on social norms. Stage 3 involves conforming to be seen as a "good person." Stage 4 is about maintaining social order and obeying laws.

- Level 3: Post-Conventional Morality. Here, individuals develop their own abstract principles of justice. Stage 5 is about the social contract and individual rights. Stage 6 is about universal ethical principles, where a person's own conscience guides their actions.

While influential, Kohlberg's theory has been criticized for focusing too much on abstract justice and potentially being biased towards Western, individualistic cultures. Nevertheless, these theories highlight that our approach to moral dilemmas is not static; it's a skill that we develop and refine throughout our lives.

The Cultural Lens: How Society Shapes Our Choices

Morality is not just an individual affair; it is deeply embedded in our culture. The values, norms, and social institutions we grow up with provide the framework for our moral reasoning. What is considered a moral imperative in one culture might be a minor issue in another.

For example, many Western societies prioritize individualism, emphasizing personal rights and freedoms. In contrast, many Eastern cultures are more collectivist, placing a greater value on community harmony and one's duties to the group. These differing cultural lenses can lead to different conclusions in moral dilemmas. Someone from a collectivist culture might be more inclined to sacrifice an individual for the good of the group, a decision that might be more difficult for someone from an individualistic culture.

Some research suggests that people from collectivist cultures, like Japan, are less likely than people from individualistic cultures, like the United States, to protect a close friend or family member who has committed a crime, prioritizing the impact on society over personal loyalty.

Conclusion: The Never-Ending Conversation

The runaway trolley we started with has no single "right" answer. That's the point. It, and other moral dilemmas, serve as a mirror, reflecting the complex and often contradictory architecture of our moral minds. They reveal the deep-seated tension between our immediate, emotional reactions and our more considered, rational calculations. They show us that our choices are not made in a vacuum but are shaped by our biology, our upbringing, and the cultural water we swim in.

The psychology behind moral dilemmas teaches us that being conflicted is not a sign of weakness but a fundamental part of the human moral experience. It's the product of a brain that evolved both to feel and to think, to connect with others on an emotional level and to reason about abstract principles. By understanding this internal tug-of-war, we can become more mindful of our own decision-making processes, appreciate the different perspectives of others, and engage in the never-ending conversation about what it means to do the right thing.